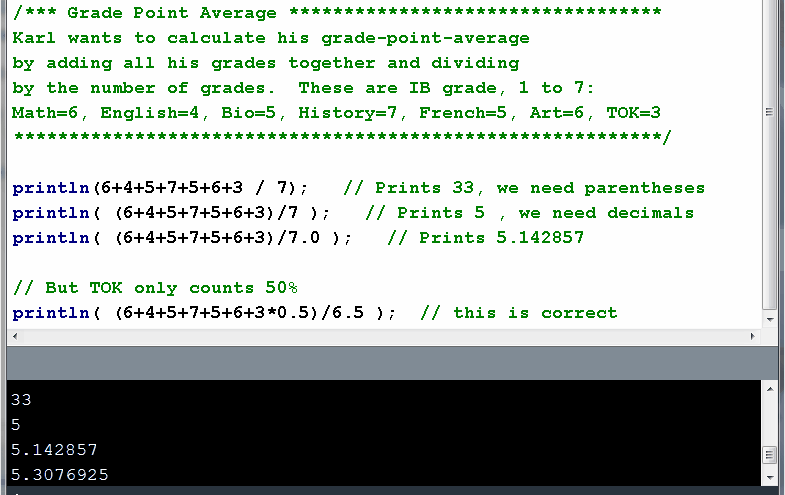

Grade-Point-Average

In Java, any number written without a decimal point is assumed to be an integer (int) value.

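As soon as a decimal point is written, the number is a floating-point value. By default Java treats such a number as a double; it is a float only if it ends with the suffix f (for example 3.5f).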
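The short sketch below is one way to check which type Java gives a written number. The class name LiteralTypes and the helper method typeOf are made up for this illustration and are not part of the downloadable program. The compiler picks the overload that matches the literal's type, so the returned text shows what that type is.

```java
class LiteralTypes {
    // Hypothetical helpers: the compiler chooses the overload
    // that matches the type of the argument passed in.
    static String typeOf(int value)    { return "int"; }
    static String typeOf(float value)  { return "float"; }
    static String typeOf(double value) { return "double"; }

    public static void main(String[] args) {
        System.out.println(typeOf(4));     // no decimal point: int
        System.out.println(typeOf(4.0));   // decimal point: double
        System.out.println(typeOf(4.0f));  // decimal point plus f suffix: float

        // The type of the numbers also decides how division behaves.
        System.out.println(7 / 2);    // int division drops the remainder: 3
        System.out.println(7 / 2.0);  // one double operand keeps the fraction: 3.5
    }
}
```

The last two lines hint at why the type matters for an average: dividing two int values discards everything after the decimal point.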

Usually a double value is better than an int value, because: